Edge TPU vs GPU: Which Accelerator Should You Choose?

2/3/2026 · AI Hardware · 7 min

TL;DR

- Edge TPU excels at low-power inference for the models it supports and is ideal for embedded devices that need predictable, low latency.

- GPU offers broader flexibility and higher raw throughput for a wide range of models, and is best for desktop inferencing, development, and larger workloads.

- Best picks by use case:

  - IoT sensors and smart home: Edge TPU or similar ASIC accelerators for battery-friendly, always-on inference.

  - Mobile robotics and drones: Edge TPU when power is constrained; GPU if you need bigger models and more on-device processing.

  - Desktop AI workstation: GPU for model experimentation, profiling, and heavier inferencing.

What they are

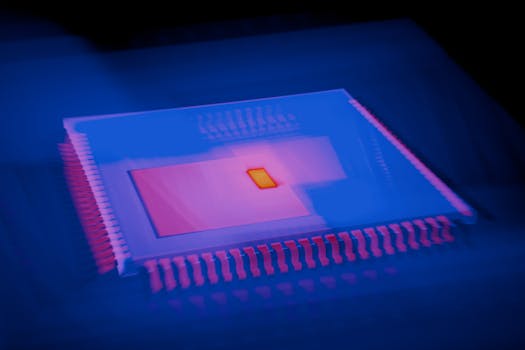

- Edge TPU: application-specific accelerators (ASICs) optimized for quantized inferencing, with a small footprint, low power draw, and predictable latency. Vendors provide them as modules, USB sticks, and SoC integrations.

- GPU: general purpose parallel processors capable of running many data types and model sizes. GPUs support training and inference with mature driver and framework ecosystems.

Performance and latency

- Edge TPUs deliver consistent low latency for supported models and operations. They shine on single inference requests and low batch workloads.

- GPUs scale well with model size and batch size. They reach higher throughput for large models and parallel workloads, but they draw more power and generate more heat, so thermal limits can cap sustained performance.

- If your target is sub-50 ms latency on a battery-powered device, Edge TPU class devices are often easier to tune.
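
To check a latency target like the 50 ms figure above, it helps to look at tail latency rather than the average. The sketch below is a minimal, accelerator-agnostic timing harness; `run_inference` is a hypothetical zero-argument callable that you would wrap around your own runtime call, and the 50 ms default budget is only the example figure from this section.

```python
import statistics
import time


def measure_latency_ms(run_inference, warmup=5, runs=50):
    """Time a zero-argument callable; return per-call latency in milliseconds."""
    for _ in range(warmup):
        run_inference()  # untimed calls so caches and lazy setup do not skew results
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        run_inference()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples_ms, budget_ms=50.0):
    """Return median and nearest-rank p95 latency, plus a budget check on p95."""
    ordered = sorted(samples_ms)
    # Nearest-rank 95th percentile, using integer math for ceil(0.95 * n).
    rank = (95 * len(ordered) + 99) // 100
    p95 = ordered[max(0, rank - 1)]
    return {
        "p50_ms": statistics.median(ordered),
        "p95_ms": p95,
        "meets_budget": p95 <= budget_ms,
    }


if __name__ == "__main__":
    # Stand-in workload; replace the lambda with a real inference call.
    print(summarize(measure_latency_ms(lambda: sum(range(10_000)))))
```

Run this on the target device with single inputs (batch size 1) if that is how the product will be used, since batch-oriented numbers can hide per-request latency.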

Power and thermals

- Edge TPU solutions typically draw single digit watts or less for modules and USB accelerators. This enables fanless designs and long battery life.

- GPUs range from mobile variants at tens of watts to desktop GPUs at hundreds of watts. Sustained performance depends on cooling and power delivery.
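
The power figures above translate into battery life with some simple arithmetic. The sketch below assumes a basic duty-cycle model (the device draws its active power while running an inference and its idle power otherwise); all function names and numbers are illustrative assumptions, not measurements of any specific accelerator.

```python
def energy_per_inference_mj(active_power_w, latency_ms):
    """Energy for one inference in millijoules (watts x milliseconds = mJ)."""
    return active_power_w * latency_ms


def average_power_w(active_power_w, idle_power_w, latency_ms, inferences_per_second):
    """Average draw under a simple active/idle duty-cycle model."""
    # Fraction of each second spent computing, capped at fully busy.
    duty = min(1.0, latency_ms / 1000.0 * inferences_per_second)
    return duty * active_power_w + (1.0 - duty) * idle_power_w


def battery_life_hours(battery_wh, avg_power_w):
    """Runtime in hours for a battery of the given capacity in watt-hours."""
    return battery_wh / avg_power_w


if __name__ == "__main__":
    # Hypothetical low-power accelerator: 2 W active, 0.5 W idle, 10 ms per inference.
    avg = average_power_w(2.0, 0.5, latency_ms=10.0, inferences_per_second=5.0)
    print(f"average power: {avg:.3f} W")
    print(f"battery life on 10 Wh: {battery_life_hours(10.0, avg):.1f} h")
```

The model ignores the rest of the system (CPU, radios, sensors), so treat the result as an upper bound on runtime.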

Model compatibility and conversions

- Edge TPUs usually require quantized models, commonly int8, and a conversion step. Not all layers or ops are supported, so models may need rework or operator replacements.

- GPUs run standard frameworks like PyTorch and TensorFlow with minimal conversion. Mixed precision modes such as FP16 or BF16 boost throughput with good compatibility.
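
To make the int8 requirement concrete, here is a minimal sketch of affine quantization, the common scheme in which a real value is approximated as `scale * (q - zero_point)`. It is written in plain Python for illustration only; in practice the vendor's converter picks these parameters per tensor or per channel from calibration data, and the helper names below are assumptions, not part of any SDK.

```python
def quant_params(min_val, max_val, qmin=-128, qmax=127):
    """Derive (scale, zero_point) mapping [min_val, max_val] onto int8."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    min_val = min(min_val, 0.0)
    max_val = max(max_val, 0.0)
    scale = (max_val - min_val) / (qmax - qmin)
    if scale == 0.0:
        return 1.0, 0  # degenerate all-zero tensor
    zero_point = round(qmin - min_val / scale)
    return scale, max(qmin, min(qmax, zero_point))


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to int8 codes, clamping anything outside the range."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q_values, scale, zero_point):
    """Recover approximate floats from int8 codes."""
    return [(q - zero_point) * scale for q in q_values]


if __name__ == "__main__":
    scale, zp = quant_params(0.0, 2.55)
    codes = quantize([0.0, 1.0, 2.55, 10.0], scale, zp)
    print(codes)                      # the last value is clamped to the top code
    print(dequantize(codes, scale, zp))
```

Values inside the calibrated range come back within about half a quantization step; values outside it are clamped, which is one reason accuracy can drop after conversion and why representative calibration data matters.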

Development ecosystem

- Edge TPU: vendor SDKs, preoptimized model libraries, and deployment tools that simplify shipping to many devices. This reduces field deployment complexity but can limit model choices.

- GPU: rich tooling for training, profiling, debugging, and model optimization. Better for research, iteration, and custom layers.

Cost and deployment at scale

- Edge TPU modules and USB accelerators are cost effective per unit, and their low power can cut operating expenses at scale.

- GPUs have higher unit and operational costs. For server side inferencing, GPUs still win when throughput per device matters.
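
A rough way to compare cost at scale is to add hardware cost to lifetime energy cost. The sketch below does only that; the prices, wattages, and fleet size in the usage example are placeholder assumptions, and it leaves out hosts, cooling, maintenance, and replacement.

```python
def lifetime_cost(unit_cost, avg_power_w, units, years, price_per_kwh, hours_per_day=24.0):
    """Return hardware, energy, and total cost for a fleet over its lifetime."""
    hardware = unit_cost * units
    # kWh = kW per unit x hours of operation x number of units
    kwh = avg_power_w / 1000.0 * hours_per_day * 365 * years * units
    energy = kwh * price_per_kwh
    return {"hardware": hardware, "energy": energy, "total": hardware + energy}


if __name__ == "__main__":
    # Placeholder figures for illustration only.
    edge = lifetime_cost(unit_cost=60.0, avg_power_w=2.0, units=1000, years=3, price_per_kwh=0.15)
    gpu = lifetime_cost(unit_cost=500.0, avg_power_w=150.0, units=1000, years=3, price_per_kwh=0.15)
    print(f"edge accelerator fleet: {edge['total']:.0f}")
    print(f"GPU fleet:              {gpu['total']:.0f}")
```

For server-side comparisons, divide the total by the number of inferences each fleet can actually serve, since one GPU may replace many low-power devices.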

When to choose which

- Choose Edge TPU if you need ultra low power, small form factor, and predictable latency, and you can quantize or adapt your model to the supported ops.

- Choose GPU if you need flexible model support, larger models, higher peak throughput, or if you plan to iterate frequently and require advanced profiling.
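
The two rules above can be written down as a small decision helper. This is a sketch under stated assumptions: the `Requirements` fields and the 10 W threshold are hypothetical and should be replaced with your own constraints.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    power_budget_w: float          # power available to the accelerator
    quantizable_to_int8: bool      # model keeps acceptable accuracy after quantization
    all_ops_supported: bool        # every op maps to the accelerator
    needs_large_models: bool       # model is too big for an edge accelerator
    needs_frequent_iteration: bool # training, custom layers, heavy profiling


def recommend(req, low_power_threshold_w=10.0):
    """Return 'Edge TPU', 'GPU', or 'revisit requirements'."""
    power_constrained = req.power_budget_w <= low_power_threshold_w
    fits_edge = (
        req.quantizable_to_int8
        and req.all_ops_supported
        and not req.needs_large_models
        and not req.needs_frequent_iteration
    )
    if power_constrained and fits_edge:
        return "Edge TPU"
    if power_constrained:
        # Tight power budget but the workload does not fit an edge accelerator:
        # shrink or adapt the model, or raise the power budget.
        return "revisit requirements"
    return "GPU"


if __name__ == "__main__":
    sensor = Requirements(3.0, True, True, False, False)
    workstation = Requirements(300.0, False, False, True, True)
    print(recommend(sensor), recommend(workstation))
```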

Buying checklist

- Power budget and thermal limits for your device.

- Supported model ops and quantization requirements.

- SDK and runtime maturity and compatibility with your stack.

- Form factor and interface: USB, M.2, PCIe, or integrated module.

- Cost per deployed unit and operating cost for the expected scale.

- Throughput and latency targets under realistic workloads.

Bottom line

Edge TPUs are the right pick for constrained devices and mass deployment when power and predictable latency matter most. GPUs remain the best all-round choice for flexible development, heavy inferencing, and situations where power and size are less constrained. Choose based on your target latency, power envelope, model compatibility, and long-term deployment scale.
